David Mountain¹, David Anderson¹, Glenn Bresnahan², Andrew Brughera¹, Socrates Deligeorges¹, Allyn Hubbard¹,³, David Lancia¹, and Viktor Vajda¹

¹ Hearing Research Center, Boston University

² Scientific Computing and Visualization group, Boston University

³ Dept. of Electrical and Computer Engineering, Boston University

Project Overview

The long-range goal of the EarLab project is to create realistic, large-scale computational models capable of predicting human auditory responses to a wide range of acoustic stimuli or environmental insults. Applications range from improving the design of cochlear implants to explaining how humans are able to function in complex acoustic environments. The simulation architecture is designed to be extensible to other physiological systems or groups of systems.

EarLab simulations are built from interchangeable building blocks, or modules. Each module represents a component of the physiological system being studied. The simulation modules are designed to be species-independent, with species-dependent parameters loaded from a parameter database at run time. Additional modules may be included in the simulation to provide stimuli (e.g., sound sources), data collection, data analysis, or visualization.
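As a minimal sketch of the module/parameter split described above (not EarLab's actual API), the Python below defines a hypothetical `Module` base class whose subclasses contain no species-specific numbers; those are looked up by species name in a dictionary standing in for the parameter database. `PARAMETER_DB`, `CenterFrequencies`, and `load_module` are illustrative names, and the frequency ranges are placeholders rather than published values.

```python
from typing import Dict, List

# Hypothetical stand-in for the parameter database: species -> module -> parameters.
# Values are illustrative placeholders, not EarLab's actual parameters.
PARAMETER_DB: Dict[str, Dict[str, Dict[str, float]]] = {
    "human": {"CenterFrequencies": {"channels": 4, "low_hz": 100.0, "high_hz": 10000.0}},
    "cat": {"CenterFrequencies": {"channels": 4, "low_hz": 200.0, "high_hz": 20000.0}},
}


class Module:
    """Hypothetical base class for a species-independent building block."""

    name = "Module"

    def __init__(self) -> None:
        self.params: Dict[str, float] = {}

    def configure(self, params: Dict[str, float]) -> None:
        # Species-dependent numbers arrive only here, at run time.
        self.params = dict(params)


class CenterFrequencies(Module):
    """Computes log-spaced channel center frequencies from its loaded parameters."""

    name = "CenterFrequencies"

    def compute(self) -> List[float]:
        n = int(self.params["channels"])
        lo, hi = self.params["low_hz"], self.params["high_hz"]
        return [lo * (hi / lo) ** (i / (n - 1)) for i in range(n)]


def load_module(module_cls, species: str) -> Module:
    """Instantiate a module and load its species-specific parameters."""
    module = module_cls()
    module.configure(PARAMETER_DB[species][module_cls.name])
    return module


human = load_module(CenterFrequencies, "human")
print([round(f) for f in human.compute()])  # prints [100, 464, 2154, 10000]
```

Swapping `"human"` for `"cat"` changes only the loaded parameters, not the module code, which is the property the paragraph describes.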

The system architecture provides a general framework for continuous-time simulation that can exploit parallel computing. The software may be run in a distributed, heterogeneous computing environment, with individual modules running on different machines. The modules communicate with each other through a transport layer, and the overall simulation is managed by a control layer. These two layers insulate the simulation modules from all synchronization, control, and communication details. The current EarLab system contains both shared-memory and network-based transports, for running on a single machine or across a computational grid. Web, GUI, and command-line interfaces are provided.
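The separation between modules, transport, and control can be illustrated with a small single-process sketch. Here each module is a plain function from one frame of samples to another, a hypothetical `InMemoryTransport` plays the role of a shared-memory transport as named FIFO channels, and a hypothetical `Controller` advances the pipeline one frame at a time. None of these names come from EarLab, and the real system runs modules in parallel and across machines, whereas this sketch runs them sequentially.

```python
from collections import deque
from typing import Callable, Deque, Dict, List, Tuple

Frame = List[float]
# (input channel, module function, output channel)
Stage = Tuple[str, Callable[[Frame], Frame], str]


class InMemoryTransport:
    """Hypothetical stand-in for a shared-memory transport: named FIFO channels."""

    def __init__(self) -> None:
        self.channels: Dict[str, Deque[Frame]] = {}

    def send(self, channel: str, frame: Frame) -> None:
        self.channels.setdefault(channel, deque()).append(frame)

    def receive(self, channel: str) -> Frame:
        return self.channels[channel].popleft()


class Controller:
    """Hypothetical control layer: runs each stage once per frame, in order.

    Module functions never touch the transport, so they are insulated from
    how frames are moved between them.
    """

    def __init__(self, transport: InMemoryTransport, stages: List[Stage]) -> None:
        self.transport = transport
        self.stages = stages

    def step(self) -> None:
        for src, module, dst in self.stages:
            self.transport.send(dst, module(self.transport.receive(src)))


transport = InMemoryTransport()
controller = Controller(transport, [
    ("stimulus", lambda f: [2.0 * x for x in f], "scaled"),      # gain stage
    ("scaled", lambda f: [max(x, 0.0) for x in f], "rectified"),  # half-wave rectifier
])
for frame in ([1.0, -1.0], [0.5, -0.5]):
    transport.send("stimulus", frame)
    controller.step()
print(transport.receive("rectified"), transport.receive("rectified"))
# prints [2.0, 0.0] [1.0, 0.0]
```

Because modules only see frames, replacing `InMemoryTransport` with a class exposing the same `send`/`receive` methods over a network would leave the module functions unchanged.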


Simulation Description

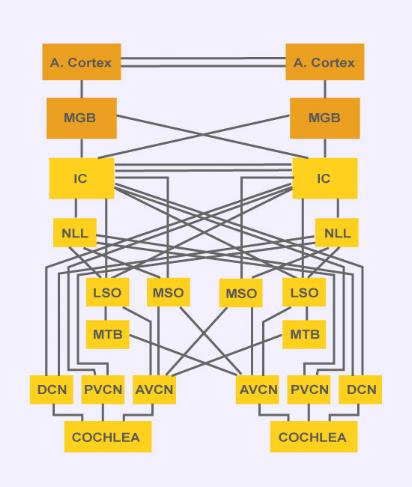

The model being demonstrated simulates the neural pathway involved in the localization of low-frequency sounds in mammals. The model includes processing by the auditory periphery [1,2,3], the cochlear nucleus [4], and the medial superior olive (MSO) in the auditory brainstem [5]. In the auditory periphery, frequency-selective vibrations occur along the basilar membrane, and the inner hair cells convert these nanometer-scale vibrations into electrical signals that drive the neural impulses of the auditory nerve. These impulses tend to phase-lock to low-frequency acoustic inputs. Bushy cells in the anteroventral cochlear nucleus (AVCN) preserve or enhance the phase-locking of their auditory-nerve inputs and relay these neural signals to the MSO. MSO neurons receive inputs from the left and right cochlear nuclei and have discharge patterns that are sensitive to the interaural time difference (ITD) of the acoustic input, an important psychophysical cue for sound localization.
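The ITD sensitivity described above is often illustrated with a coincidence-detection (Jeffress-style) scheme. The sketch below implements that simplified scheme, not the excitation-and-inhibition MSO model of [5] or the EarLab implementation: it skips the periphery and AVCN stages and directly generates phase-locked spike trains for a 500 Hz tone, with the right ear lagging by an assumed ITD of 300 µs. It also computes vector strength, a standard measure of phase-locking. All function names, the spike-generation rule, and the parameter values are hypothetical.

```python
import bisect
import math
import random
from typing import List


def phase_locked_spikes(freq_hz: float, duration_s: float, delay_s: float,
                        jitter_s: float, p_fire: float,
                        rng: random.Random) -> List[float]:
    """Spike times (s) locked to one phase of a tone, shifted by delay_s.

    Simplification: at most one spike per cycle, fired with probability
    p_fire at the cycle start plus Gaussian timing jitter.
    """
    period = 1.0 / freq_hz
    spikes = []
    for k in range(int(duration_s * freq_hz)):
        if rng.random() < p_fire:
            spikes.append(k * period + delay_s + rng.gauss(0.0, jitter_s))
    return spikes


def vector_strength(spikes: List[float], freq_hz: float) -> float:
    """Phase-locking metric: 1 means perfect locking, near 0 means none."""
    c = sum(math.cos(2 * math.pi * freq_hz * t) for t in spikes)
    s = sum(math.sin(2 * math.pi * freq_hz * t) for t in spikes)
    return math.hypot(c, s) / len(spikes)


def coincidences(left: List[float], right: List[float],
                 internal_delay_s: float, window_s: float) -> int:
    """Count left/right spike pairs within window_s after delaying the left input."""
    right = sorted(right)
    total = 0
    for t in left:
        x = t + internal_delay_s
        # Number of right spikes strictly inside (x - window, x + window).
        total += bisect.bisect_left(right, x + window_s) - bisect.bisect_right(right, x - window_s)
    return total


rng = random.Random(1)
FREQ, ITD = 500.0, 300e-6  # illustrative: 500 Hz tone, right ear lags by 300 us
left = phase_locked_spikes(FREQ, 2.0, 0.0, 100e-6, 0.5, rng)
right = phase_locked_spikes(FREQ, 2.0, ITD, 100e-6, 0.5, rng)

# A bank of hypothetical MSO-like detectors, each with its own internal delay.
delays = [d * 1e-6 for d in range(-1000, 1001, 100)]
counts = [coincidences(left, right, d, 100e-6) for d in delays]
best = delays[counts.index(max(counts))]
print(f"vector strength (left) = {vector_strength(left, FREQ):.2f}")
print(f"best internal delay = {best * 1e6:.0f} us")
```

The detector whose internal delay best compensates for the ITD collects the most coincidences, so the position of the peak across the detector bank encodes the ITD.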

References

[1] Lopez-Poveda EA, Meddis R (2001). A human nonlinear cochlear filterbank. J Acoust Soc Am, 110(6):3107-18.

[2] Deligeorges S, Mountain DC (1997). A model for periodicity coding in the auditory system. In: Bower, Ed. Computational Neuroscience, pp. 609-615. Plenum Press, New York.

[3] Gaumond RP, Molnar CE, Kim DO (1982). Stimulus and recovery dependence of cat cochlear nerve fiber spike discharge probability. J Neurophysiol, 48(3):856-73.

[4] Rothman JS, Young ED, Manis PB (1993). Convergence of auditory nerve fibers onto bushy cells in the ventral cochlear nucleus: implications of a computational model. J Neurophysiol, 70(6):2563-83.

[5] Brughera AR, Stutman ER, Carney LH, Colburn HS (1996). A model with excitation and inhibition for cells in the medial superior olive. Auditory Neurosci, 2:219-33.

Acknowledgments

This research is supported by NIDCD and NIMH, award DC04731.