Parallel performance and parallel algorithms

by Prof. L. Ridgway Scott

University of Chicago

Parallel performance

One of the goals in high-performance computing (HPC) is to achieve the best possible performance from parallel computers. But performance can be measured in a variety of ways; in HPC, we are generally interested in the speed at which floating-point computation is done. Thus, we use for example the units of giga-flop/s (a billion floating point operations per second).

A given computer will have a maximum floating point performance, and a given code can never exceed this figure. Often, it falls short by a vast amount, especially in parallel. It is much more difficult to achieve parallel performance than sequential performance, and it is also more difficult to measure. Factors that become critical are synchronization, load balance, and communication costs.

This part of the course will focus on parallel performance analysis; we will cover:

- performance measures

- limits to performance

- scalability

- load balancing

- real-world parallelism

Parallel algorithms

Central to the subject of parallel computing are algorithms. As a general rule, no parallelism of any significance occurs naturally; it must be created by removing dependencies. Often, entirely new algorithms have to be created to achieve a certain task in parallel.

One aspect in particular that requires efficient algorithms is collective operations. These are operations involving a group of processors to produce a single data structure or task result. For example, a barrier in shared memory computing, and a broadcast in message passing are collective operations.

In this part of the course, we will cover:

- collective operations: prefix and scan

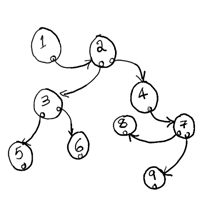

- tree/ring algorithms

- matrix-vector product: the power method

We will also discuss briefly some aspects of linear systems of equations, in particular their dependence analysis.

Supplementary material

- Scientific Parallel Computing, L. Ridgway Scott, Terry Clark, Babak Bagheri, Princeton University Press, 2005 [link for purchase]