Truths and Half-truths

An ecologist, an estuary, and a case study on communicating climate change

Ted Smayda was a 28-year-old assistant marine biologist, “just one of the rinky-dinks,” he says, when he first started gathering data on Rhode Island’s Narragansett Bay more than 50 years ago. Smayda chugged into the bay on the University of Rhode Island’s research boat, the Billie II, to a monitoring station near tiny Fox Island. There he collected water samples to measure levels of salt, nutrients, and plankton. He also measured the water’s temperature on the surface and at 5 and 10 meters deep. He kept up his weekly “Monday sample” for four decades, adding many more measurements over the years. His Phytoplankton Survey, as it came to be known, became one of the longest running and most valuable coastal data sets on phytoplankton growth conditions in the world.

From Smayda’s careful measurements eventually unspooled an unlikely climate change controversy involving a passionate Boston University ecologist, an outspoken US senator, and a prominent watchdog website. The controversy demonstrates how hard it can be to clearly communicate science to the public, especially when it involves a hot-button topic like climate change. Unpacking this episode offers a chance to examine how scientific data is collected and interpreted, and what can go wrong when the results go mainstream. It’s a story with no villains, but many lessons.

The senator and the scientist

From Ted Smayda’s first samples, fast-forward 50 years to April 9, 2013. On that day, US Senator Sheldon Whitehouse (D-R.I.) stood on the Senate floor and presented a 15-minute speech on the perils of climate change. This was familiar territory for the senator, who delivers one of his “Time to Wake Up” speeches every single week. The weekly speeches are a high-stakes move in Congress, where climate change skeptics—most famously Senator James Inhofe (R-Okla.)—argue that more environmental regulation will only hurt the US economy and put Americans out of work. Whitehouse stands clearly in opposition. The Huffington Post says that Whitehouse’s “ever-changing, ever-present floor speeches—warnings over rising sea levels, warmer oceans, eroding coastlines, and more—make him the Senate’s loudest, most persistent voice on the dangers of climate change.” They also make him a target for critics. Former Weather Channel meteorologist Herbert E. Stevens, for instance, writes frequent opinion pieces to the Providence Journal poking fun at “warmanistas” and picking apart Whitehouse’s “misguided pronouncements.”

On this particular day, after sounding the alarm on rising sea levels and the dwindling winter flounder catch, Whitehouse offered a statistic that would spawn a controversy: “Narragansett Bay waters are getting warmer,” he said. “Four degrees Fahrenheit warmer in the winter since the 1960s.” The number came from a 2009 study published in Estuarine, Coastal and Shelf Science, citing temperature data collected between 1960 and 2006. The study was coauthored by Robinson W. (“Wally”) Fulweiler, a College of Arts & Sciences associate professor of earth and environment and of biology; the lead author was Scott Nixon, who had been Fulweiler’s thesis advisor. Fulweiler studies how nutrients like nitrogen and phosphorus flow from land into coastal waters and how humans have changed that flux through agriculture and development. In her work she examines things like phytoplankton blooms and sediment chemistry. “We spend a lot of time with our head in the mud,” she says. That 2009 study focused on decreasing phytoplankton growth in Narragansett Bay during the winter, related to warmer water and cloudier skies. The study included a plot of Smayda’s temperature data.

BU ecologist Robinson W. Fulweiler, whose work on Narragansett Bay spawned an unlikely climate change controversy. Photo by Melody Komyerov

A few weeks after the senator’s April 9 speech, PolitiFact Rhode Island, a partnership between the Journal and PolitiFact.com, evaluated Whitehouse’s claim. PolitiFact.com is an independent, Pulitzer Prize–winning website that scrutinizes statements from politicians, advocacy groups, and other public figures and ranks them on its Truth-o-Meter somewhere between “true,” “false,” and the dreaded “pants on fire.” One of the Journal’s PolitiFact reporters, C. Eugene Emery, Jr., after examining and analyzing the temperature data himself and consulting with an ecologist not involved with the study, determined that the rise in temperature was closer to 2.5 degrees Fahrenheit. “This was off by enough that I had cause for concern,” he says. He drafted an analysis and three PolitiFact judges—all editors in the Journal newsroom—labeled the senator’s statement “half true,” which is defined on the PolitiFact website this way: “The statement is partially accurate but leaves out important details or takes things out of context.” Emery’s accompanying article noted that “the trend is certainly correct, but Whitehouse is too far off the Truth-o-Meter to register true. It is, pardon the pun, a matter of degree.”

But the reporter’s conclusion was half-true as well. He had inadvertently analyzed a different set of data than that used in the 2009 report, coming up with a different number. While the reporter’s analysis wasn’t necessarily incorrect (more on that later), by comparing the apples and oranges of two different data sets, it unfairly tainted the senator—and by association, the scientists—as dissemblers.

After the “half-true” story came out, Wally Fulweiler, the scientist, and Seth Larson, Whitehouse’s communications director, both spoke with the reporter separately. “It was frustrating, because we, and the senator, felt like his speech had accurately represented the findings of the Nixon article,” says Larson. “We argued our case to PolitiFact, but they ultimately made their own decision.” Reporter Emery says he also reached out to the ecologist he had previously consulted, the one not involved with the study, to clarify additional details, but did not hear back. He did not change the story.

Nixon, the lead author on the 2009 study, had died in 2012, and Fulweiler felt personally responsible for clearing his name. Scientists stake their careers on data: collecting it carefully, analyzing it methodically, and taking pains to omit any personal bias. The “half-true” label not only stung, but threatened reputations. “Scott Nixon was this wonderful man, and he didn’t do bad science,” says Fulweiler. “I felt like the reporter was implying that we did, and that bothered me because Scott wasn’t here to defend himself.” Instead of fuming in silence or firing off an angry op-ed, Fulweiler chose to fight back with the best weapon she had: peer-reviewed science. She and her colleagues turned the episode into a case study in scientific communication, which they published online in Estuarine, Coastal and Shelf Science in February 2015.

The plot

What happened on the road from data to debunker, and where did things go wrong? To understand that, it’s necessary to step back and look at a larger question: how exactly do scientists know that the oceans and coastal waters are getting warmer? Like Smayda at the beginning of this story, they collect data. Long-term data like the Phytoplankton Survey—collected over decades, rather than years—is especially valuable because it can help account for natural variability: a couple of uncannily cold summers or weirdly warm winters won’t disguise the overall trend. And that’s what climate scientists are looking for—significant, long-term trends. Although Smayda’s survey was mostly concerned with the growth and decline of phytoplankton species, the temperature data he collected has proven useful for other scientists studying the bay.

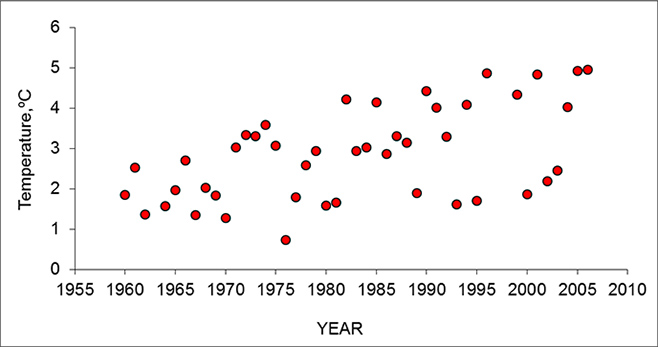

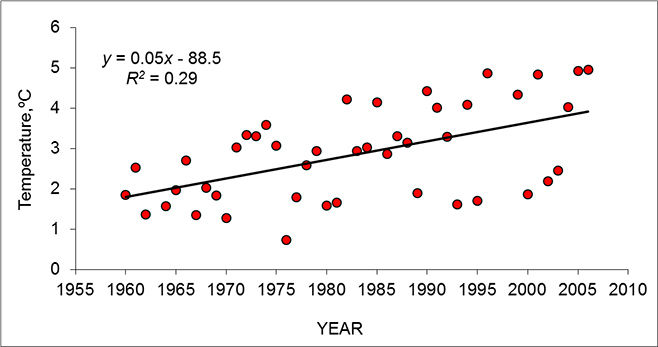

When scientists plot data on a graph, at first glance it sometimes makes no sense. Take a look at this data from the disputed 2009 study. It shows winter temperature measurements in Narragansett Bay between 1960 and 2006, taken from the Phytoplankton Survey. The years go only to 2006 because that was the most recent data available when Nixon, Fulweiler, and their colleagues completed their paper. Without analysis, the data points just look like a handful of red marbles scattered on the floor:

But then ask a statistician to average the data points, and if possible, draw a straight line that represents the overall trend. This is what you get:

This is called a linear regression analysis, a fundamental tool of statistics. “Linear” tells you that there’s a straight line. “Regression” is a term from statistics that means you’re looking at the relationship between two or more variables. And “analysis” indicates that the line is determined by standard mathematical equations and the rules of statistics, not by drawing a random line wherever you feel like it. This particular linear regression analysis shows an obvious trend: the water temperature in Narragansett Bay is going up.
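For readers who want to see the "standard mathematical equations" at work, here is a minimal sketch of an ordinary least-squares fit in Python. The temperatures are synthetic stand-ins generated with an invented trend and invented noise, not the Phytoplankton Survey measurements, and the function name `fit_line` is simply a label chosen for this illustration.

```python
import random

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Spread of x around its mean, and how x and y vary together
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Synthetic data for illustration only (assumed trend and noise level)
rng = random.Random(0)                      # fixed seed so the run is repeatable
years = list(range(1960, 2007))             # 1960 through 2006
temps = [35.0 + 0.08 * (y - 1960) + rng.gauss(0, 1.5) for y in years]

slope, intercept = fit_line(years, temps)
total_change = slope * (years[-1] - years[0])   # fitted change over the record
print(f"fitted slope: {slope:.3f} deg F per year")
print(f"fitted change, 1960-2006: {total_change:.1f} deg F")
```

The headline figure in a claim like "four degrees warmer since the 1960s" is the fitted slope multiplied by the length of the record, which is why both the data set and the end year matter.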

When Emery, an experienced reporter who has covered science and medicine for almost 40 years, ran his own analysis, he did two things differently. First, he didn’t use temperature data from the Phytoplankton Survey. Rather, he inadvertently took data from the Fish Survey, a separate long-running study in Narragansett Bay that gathers data on—you guessed it—fish. The two data sets are similar, but not identical, with the phytoplankton data typically collected in the morning and the fish survey in the afternoon. In addition, the reporter’s data set contained four additional years, 2006 to 2010. Emery did this intentionally: “I wanted to know the most recent data, not rely on an older paper,” he says. “This is a case where we did the best due diligence we could. I really wanted to get it right.” However, those four extra years happened to include a few especially cold winters. His analysis—not vetted by a statistician, a peer-reviewed journal, or any of the scientists involved in the 2009 study—showed that the winter waters of Narragansett Bay had warmed only about 2.5 degrees Fahrenheit.
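To see why a few cold winters at the end of a record can pull a trend down, consider the following sketch. Every number in it is invented for illustration: a perfectly clean warming trend through 2006, followed by four hypothetical winters that each fall 3 degrees below that trend. It is not a reconstruction of either survey or of the reporter's analysis.

```python
def ols_slope(xs, ys):
    """Least-squares slope of ys against xs."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

def total_change(xs, ys):
    """Fitted change between the first and last year of the record."""
    return ols_slope(xs, ys) * (xs[-1] - xs[0])

# Invented record: a clean trend of 0.08 deg F per year, 1960 through 2006
years = list(range(1960, 2007))
temps = [35.0 + 0.08 * (y - 1960) for y in years]

# Four hypothetical cold winters, each 3 deg F below the earlier trend line
extra_years = [2007, 2008, 2009, 2010]
extra_temps = [35.0 + 0.08 * (y - 1960) - 3.0 for y in extra_years]

change_short = total_change(years, temps)
change_long = total_change(years + extra_years, temps + extra_temps)
print(f"fit through 2006: {change_short:.2f} deg F")
print(f"fit through 2010: {change_long:.2f} deg F")
```

Points at the very end of a record carry a lot of leverage in a straight-line fit, so the second number comes out lower than the first even though 47 of the 51 data points are unchanged.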

Now comes the rebuttal. For their February 2015 paper Fulweiler and her coauthors laid out a statistical smorgasbord, analyzing data from the Phytoplankton Survey, the Fish Survey, and an automated National Oceanic and Atmospheric Administration (NOAA) buoy on the other side of the bay. Their intent was not to exactly match the PolitiFact reporter’s analysis. They had bigger goals: to update the record with all the data available, and demonstrate just how variable long-term temperature data can be.

Here’s what they found. Their linear regression analyses showed winter increases of 3.4 degrees Fahrenheit (Phytoplankton Survey), 2.9 degrees Fahrenheit (Fish Survey), and 3.6 degrees Fahrenheit (NOAA buoy). Hmmm, you say, scratching your head, those are a lot of different numbers. Which one is right? Here’s the point: they’re all right. This is the big lesson. While all those numbers appear to be different, because of the inherent margin of error in the data, they are basically identical to scientists and statisticians. It’s sort of like saying you weigh 180 pounds, but the average bathroom scale isn’t that precise: one may say 179.8 pounds, another may say 180.2. It’s all pretty much the same. As Fulweiler and her coauthors write, with their scientific precision, “These updated winter trends are not meaningfully different from one another when accounting for the model variability.” Or as fellow scientist Smayda says more colloquially: “Everyone agrees the bay is getting warmer. The differences are diddly.”
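One rough way to picture "not meaningfully different" is to attach an uncertainty range to each fitted trend and see whether the ranges overlap. The sketch below does that for two synthetic series that share the same invented underlying trend but have different random noise. The plus-or-minus two standard errors rule is a common rule of thumb assumed here for illustration; the article does not describe the exact statistical method used in the 2015 paper, and overlapping ranges are a heuristic, not a formal test.

```python
import math
import random

def slope_with_se(xs, ys):
    """Least-squares slope and its standard error."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Sum of squared residuals around the fitted line
    sse = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    se = math.sqrt(sse / (n - 2) / sxx)
    return slope, se

def rough_interval(slope, se, span_years):
    """Approximate range for total change: estimate +/- 2 standard errors."""
    return ((slope - 2 * se) * span_years, (slope + 2 * se) * span_years)

def intervals_overlap(a, b):
    """True if two (low, high) ranges share any values."""
    return a[0] <= b[1] and b[0] <= a[1]

# Two synthetic "surveys": same invented trend, different invented noise
years = list(range(1960, 2011))
span = years[-1] - years[0]
for label, seed in [("survey A", 1), ("survey B", 2)]:
    rng = random.Random(seed)
    temps = [35.0 + 0.07 * (y - 1960) + rng.gauss(0, 1.5) for y in years]
    slope, se = slope_with_se(years, temps)
    low, high = rough_interval(slope, se, span)
    print(f"{label}: {slope * span:.1f} deg F (roughly {low:.1f} to {high:.1f})")
```

Two headline numbers can differ by several tenths of a degree while their ranges still overlap substantially, which is the sense in which scientists call such estimates basically identical.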

Smayda, now a professor at the University of Rhode Island Graduate School of Oceanography, makes another, critical point: that the temperature is only part of a larger, much more complex picture. “The ultimate question is, what is the impact on the biology and ecology of the bay? The change in temperature is just the frame for the picture.”

Scientists have collected three sets of long-term temperature data from Rhode Island’s Narragansett Bay; all of them show that it’s getting warmer. Photo by Doc Searls

Where does this leave Whitehouse? Although the data he quoted ended in 2006, the overall trend he noticed was certainly correct. Sure, his staffers could have downloaded more recent data and run their own analysis, just as the reporter did. But a statistical analysis run by a handful of political staffers would—with good reason—be viewed more skeptically than research published by an independent scientist in a peer-reviewed journal. The senator quoted the most recent peer-reviewed science available, says Fulweiler, and she thinks this was the right thing to do. “You can’t say that the senator is lying because his staffers haven’t downloaded and analyzed new data. That’s not their job, and it’s not appropriate,” she says. “That muddies the waters and makes a negative story where there isn’t one.”

“It was frustrating,” she adds. “There are so many things in science that are confusing and crazy and that we don’t know, and this isn’t one of them. This is something we know pretty well.”

Douglas Starr, codirector of the College of Communication Center for Science & Medical Journalism, says that such disconnects are becoming more common as we move into an era of Big Data journalism. “The tools of scientific analysis are becoming more and more accessible to smart, enterprising journalists,” says Starr (COM’83). Students in the Science Journalism Master’s program take a three-day “boot camp” to learn how to access and analyze data. But Starr emphasizes that journalists’ stats and spreadsheets cannot replace scientific expertise. “These are just the tools to get you to the story and help you ask better questions,” he says. “Then you have to bounce it off an expert.”

Fulweiler describes the Whitehouse episode as a missed opportunity for dialogue with the public. She hasn’t changed the way she’ll do science or communicate results in journals, but it’s made her think more about how data gets translated to the popular press. “I’m interested in why there seems to be a ‘gotcha’ mentality in the press, instead of a ‘wait, let me understand what’s going on here.’” She thinks the popular press is important, but that scientists, journalists, and the public need to take more time to talk through findings together and really understand them. “There is a continued need for open communication between those who do the science and those who have a voice with which to share it,” she writes in the conclusion of her recent paper. “After all, we are all seeking the whole truth.”
