Could Taxes Deter the Spread of Harmful Fake News?

Questrom prof: economic theory about taxation could curb dissemination of misinformation

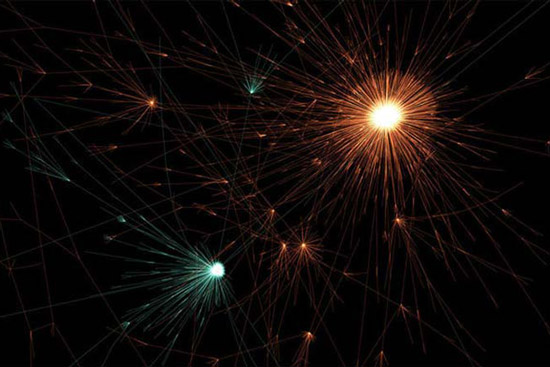

This visualization of Twitter activity shows how tweets about fake news (orange) spread farther than tweets about real news (teal). Credit: image, Peter Beshai; data, Soroush Vosoughi, Deb Roy, and Sinan Aral

Marshall Van Alstyne, a Boston University information economist, stands before a group of students; behind him is projected an image of teal and orange fireworks. Except they’re not fireworks—they’re data points plotting the diffusion of true and false news stories over the years.

The fake stories look like gigantic chrysanthemums, their fading tails bursting from a concentrated source. In comparison, the “true news” stories are smaller blips against the backdrop of the overwhelming and infinite web of our communication universe. The visualization, which Van Alstyne borrowed from a 2018 Science study for a recent presentation on his research, illustrates how stories of falsehood spread faster and more broadly than stories of truth “in all categories of information.”

Fake news invades our communications networks in many forms, Van Alstyne says. It can appear in the form of a story with intent to misinform or as an article lacking appropriate context despite good intentions. Sometimes, the term “fake news” can be applied too loosely, or worse, he says, co-opted by powerful figures in an effort to sow doubt in honest reporting.

“The simplest definition [of fake news] is information that causes harm at scale,” says Van Alstyne, a Questrom School of Business professor of information systems. “Fake news may be implicated in the last presidential election; fake news may be duplicated in Brexit. We see it in [the argument against vaccines]. This is such an enormous social problem with real social consequences…. It’s not a new problem, it’s old wine in a new bottle.”

Van Alstyne hopes to solve the complex problem of fake news through his research at the intersection of information systems and economics.

The main challenge is that fake news is particularly hard to combat. Fake news tenaciously holds on to the collective memory of those who have been exposed, he says. It spreads quickly with the help of social media (recall how quickly false reports on the identity of the Boston Marathon bomber spread on Twitter). It’s subversive, spreading on websites like 4chan, where users post anonymously. It is hard to disprove, as determining absolute truth is a challenge in and of itself. And finally, he says, there are no clear consequences for those who spread false information. Platforms such as Facebook, whose practices have been increasingly called into question, have historically taken no responsibility for the fake news shared on their feeds (although that’s finally beginning to change).

When fake news hurts real people

Van Alstyne’s concern is not fake news exchanged between two individuals, but the mass spread of misinformation at the societal level, which can lead to “negative externalities.” He explains that an “externality” is a consequence of some activity that affects other parties, particularly people who were not involved in the activity. Drunk driving deaths are an example of a negative externality: they affect many individuals through no fault of their own, other than being in the path of an intoxicated driver.

Likewise, he believes fake news is contributing to negative externalities—and that fake news producers should be held accountable. The recent measles outbreak, which has now affected more than 880 people across multiple states, was in part due to the spread of fake news, he says. Parents decided not to vaccinate their children based on anti-vaccination literature on the internet and social media. As a result, a New York county recently declared a state of emergency and banned unvaccinated children from public spaces.

To deter fake news from spreading and hurting others, Van Alstyne proposes a radical idea borrowed from economics: a Pigouvian tax on negative externalities. Named after the English economist Arthur Pigou, this tax is commonly applied to deter negative behaviors, such as levying a carbon tax on companies that pollute the air or putting an extra tax on the cost of buying cigarettes. Financially penalizing those who choose to smoke may lead some to forgo the habit, thereby improving public health. And just as companies are taxed for the air pollution they create, he believes that producers of fake news should be taxed for the harm they cause beyond their platforms.

He says the harm fake news producers cause others—and the monetary value of that harm—could be decided by some independent body, comparable to a nonpartisan judicial body. “You could sample messages, find out some proportion of false and damning information, and then tax in proportion to the damage that’s being done,” he explains.
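The sample-then-tax idea Van Alstyne describes can be pictured with a small sketch. It is only an illustration, not his method: the function name, the `is_false_and_harmful` label (standing in for the independent body's judgment), and the per-message damage figure are all hypothetical assumptions.

```python
import random
from dataclasses import dataclass


@dataclass
class Message:
    text: str
    # Hypothetical label: assumed to come from the independent review body.
    is_false_and_harmful: bool


def estimate_harm_tax(messages, sample_size, damage_per_harmful_message, seed=0):
    """Return (harmful share in the sample, tax proportional to estimated damage).

    Assumption: every false-and-harmful message causes the same monetary
    damage, which is a simplification made only for this sketch.
    """
    if not messages:
        return 0.0, 0.0
    rng = random.Random(seed)
    # Review a random sample instead of every message.
    sample = rng.sample(messages, min(sample_size, len(messages)))
    harmful_share = sum(m.is_false_and_harmful for m in sample) / len(sample)
    # Scale the sampled share up to the producer's full message volume.
    estimated_harmful = harmful_share * len(messages)
    return harmful_share, estimated_harmful * damage_per_harmful_message
```

In this sketch, a producer whose sample shows a larger share of false and harmful messages, or who publishes more messages overall, would owe proportionally more. How to value the damage per message is exactly the judgment the article leaves to the independent body.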

Van Alstyne insists, though, that this would not be a tax on free speech. “What you’re doing is you’re taxing the damage. You’re not taxing the speech,” he says.

Jay D. Wexler, a BU School of Law professor of law, finds this proposal problematic. Contrary to popular belief, free speech is not an absolute right, he says. “The government can prosecute people for making threats or inciting people to illegal activity. They can make reasonable time, place, [and] manner restrictions on how they can speak,” he says. A simple example is saying the word “bomb” at an airport; even if it’s a joke, you can get arrested for it.

“It’s clever to try to divorce the harm from the speech,” says Wexler, “but how do you know some independent body won’t determine at some point that what you say is causing harm? It’s still [leaving that distinction up to] some government actor, even if it was a federal judge who is presumably not influenced by politics.”

Curbing fake news at the source

Van Alstyne says another solution would be to “put friction” on the liars themselves, not just the lies they promulgate, and make it less likely for fake news to spread as quickly. This could take the form of curbing the source’s social network, either by temporarily suspending the account or by limiting the number of people who can follow it. This would not censor free speech, he argues; rather, it would penalize sources for the harm their speech causes. In effect, it takes away the liar’s metaphorical megaphone.

“This puts the incentive back on the liar to stop lying,” says Van Alstyne. “You don’t necessarily have a right to amplification. And if [the information you’re spreading is] provably false, you’re not going to be amplified.” He concedes, though, that it’s not a simple fix, hinting at the tension between curbing an individual’s social network and encouraging free expression.

Wexler says there is no First Amendment issue with this proposal if social platforms, like Facebook or Twitter, are the ones curbing the networks of their users. “There you have a private party…. It’s just a matter of whether it’s good policy for Twitter, for example.”

And Wexler is inclined to say that private platforms should make these decisions, because he believes it would be dangerous to give the government such authority. “Trying to jail people for expressing unpopular opinions about the war or political system…. I think that’s what we might end up with if [the government] were to [have] more robust authority to regulate speech,” he says.

Van Alstyne has also considered this difficulty in balancing free speech and harm. “On the one hand, free speech can lead to…increased justice, it can lead to whistle-blowing in cases of criminal activity. [On] the other hand, it can lead to Russian interference in elections.”

Perhaps the most realistic solution already exists. Currently, viewers and listeners can learn who paid for a political advertisement, be it on television or radio. Van Alstyne proposes applying this same labeling mechanism to social media platforms.

“It’s something that I think probably needs tools from a couple of different disciplines to solve it,” he says. “I don’t think it can be solved with technology alone…. I don’t think it’d be solved by regulation alone. I think it’s going to require a combination of different things, but I think it’s a fascinating problem with big repercussions.”

Anastasia Lennon can be reached at aelennon@bu.edu.
