SignStream® version 3!*

A new Java version of SignStream® is available!

Get Version 3.5.1 - an update released in May of 2025.

Users of older versions are urged to upgrade to 3.5.1, for critical bug fixes and several new features!

This version works with macOS Sequoia (15). It includes enhancements to the Search feature and some minor bug fixes; for details, see http://www.bu.edu/asllrp/rpt27/asllrp27.pdf

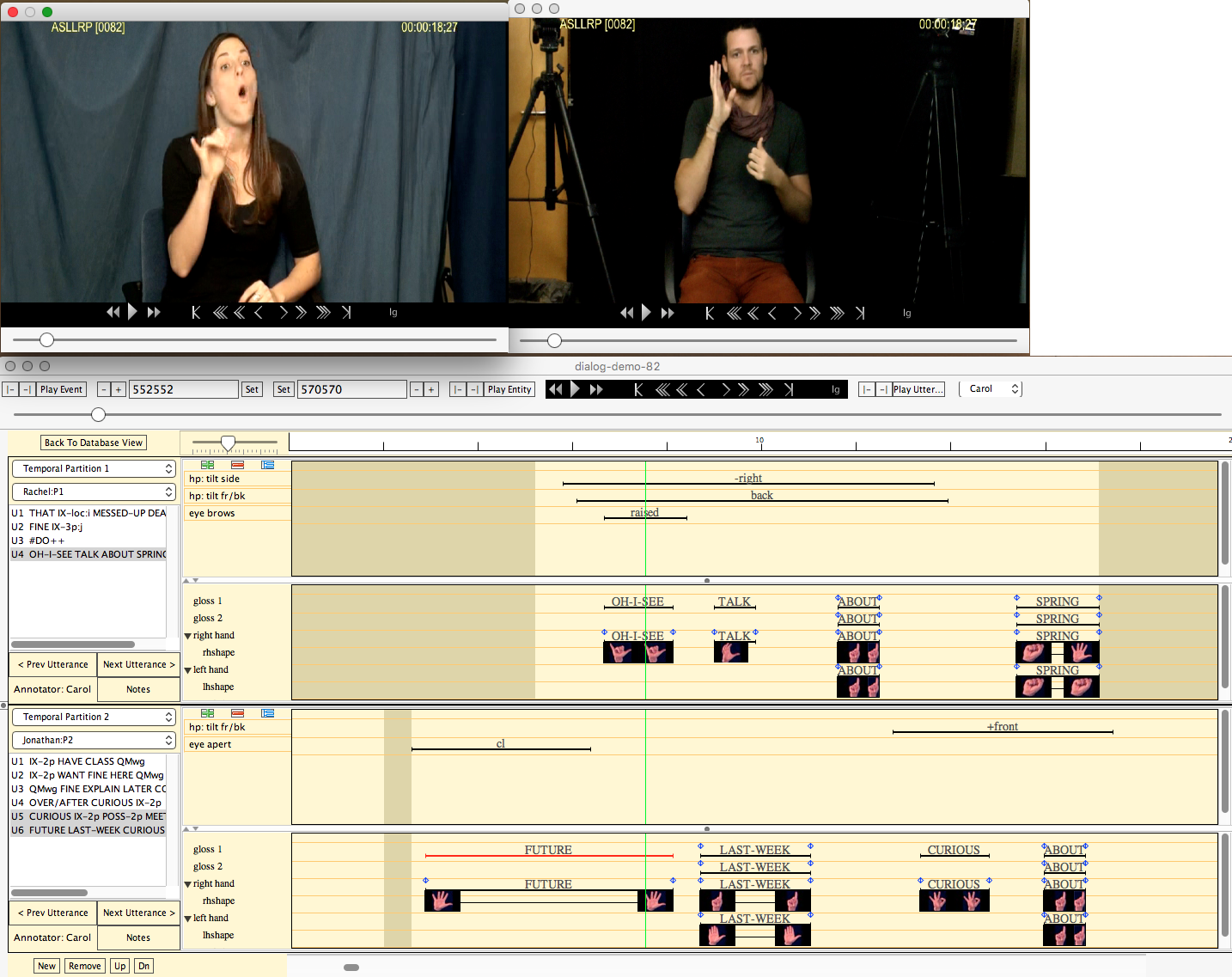

Designed to facilitate linguistic annotation and analysis of video data from American Sign Language (ASL).

System requirements:

- SignStream® runs only on macOS computers:

- macOS Sequoia (15), Sonoma (14), Ventura (13), Monterey (12), Big Sur (11), Catalina (10.15), or Mojave (10.14).

- SignStream® 3.5.1 may also work on older versions of macOS.

Download the latest version (3.5.1) of the software - and follow the instructions for installation carefully.

Click here for license information

IMPORTANT: If you are upgrading from a previous version of SignStream®, please note:

SignStream files that were last saved in a version of SignStream 3 prior to 3.3.0 cannot be opened with this current version of SignStream (3.5.1). To open such files, you will need to first open them with SignStream version 3.3.0 and save them; those saved versions will then be able to be opened with the current version of SignStream. If you do not have SignStream version 3.3.0 installed and need a link to download that version of the software for this purpose, please email Carol Neidle (carol@bu.edu) for a download link. (System requirements for version 3.3.0 will be detailed in the email reply.)

User guide: ASLLRP Report No. 15 Neidle, C. [2017]: A User's Guide to SignStream® 3

[pdf - 24 MB]

About the 3.1.0 update: ASLLRP Report No. 16 Neidle, C. [2018]: What's new in SignStream® 3.1.0 ?

[pdf - 1 MB]

About the 3.3 update: ASLLRP Report No. 17 Neidle, C. [2020]: What's new in SignStream® 3.3.0 ?

[pdf - 14 MB]

About the 3.4 update: ASLLRP Report No. 22 Neidle, C. [2022]: What's new in SignStream® 3.4.0 ?

[pdf]

About the 3.4.1 update: ASLLRP Report No. 23 Neidle, C. [2023]: What's new in SignStream® 3.4.1 ?

[pdf]

About the 3.5.0 update: ASLLRP Report No. 26 Neidle, C. [2024]: What's new in SignStream® 3.5.0 ?

[pdf]

About the 3.5.1 update: ASLLRP Report No. 27 Neidle, C. [2025]: What's new in SignStream® 3.5.1 ?

[pdf]

The application works with the following video codecs; if your video is in a different format, first convert it to one of these:

- MPEG-4 (.mp4, .mov)

- AVC1 (.mp4, .mov)

- H.264 (.mp4, .mov)
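The conversion step mentioned above could be sketched as follows. This is a minimal illustration, assuming the separate, free `ffmpeg` command-line tool is installed (with its `libx264` encoder) and on the PATH; ffmpeg is not named anywhere in the SignStream® documentation, and the helper `build_ffmpeg_command` and the file name `session01.avi` are hypothetical. The sketch only builds the command that re-encodes a video as H.264 in an .mp4 container; running it is left to the user.

```python
import shlex
from pathlib import Path


def build_ffmpeg_command(source: str, target_dir: str = ".") -> list[str]:
    """Build an ffmpeg command that re-encodes `source` as H.264 video in .mp4.

    Assumption: ffmpeg (built with libx264) is installed; this helper is
    illustrative and not part of SignStream.
    """
    src = Path(source)
    # Keep the original base name and mark the output as the H.264 copy.
    dst = Path(target_dir) / (src.stem + "_h264.mp4")
    return [
        "ffmpeg",
        "-i", str(src),         # input file in its original format
        "-c:v", "libx264",      # encode the video stream as H.264
        "-pix_fmt", "yuv420p",  # widely supported pixel format
        "-c:a", "aac",          # encode any audio stream as AAC
        str(dst),
    ]


if __name__ == "__main__":
    cmd = build_ffmpeg_command("session01.avi")
    # Print the command for inspection; to execute it, one could use
    # subprocess.run(cmd, check=True) from the standard library.
    print(shlex.join(cmd))
```

Building the command as a list rather than a single string avoids shell-quoting problems with file names that contain spaces.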

- Gregory Dimitriadis, Application Developer at Rutgers University (July 2015 - present)

- James Felice, Application Developer at Rutgers University (October 2020 - 2021)

- Douglas Motto, Application Developer Supervisor at Rutgers University (February 2016 - present)

- Charles Hedrick, Director, LCSR, Rutgers University (2022 - present)

- Augustine Opoku, Application Developer Supervisor at Rutgers University (July 2015 - January 2016)

Thanks also to:

Jason Boyd, George Kierstein, Robert G. Lee, Joan Nash, John Olson, Stan Sclaroff, Ashwin Thangali, Christian Vogler, and Iryna Zhuravlova, for prior contributions to design and implementation; and to our linguistic consultants and to all those at BU and Gallaudet University who have used versions of the program, provided feedback and suggestions, and shared their expertise!

Partial list: Justin Arrigo, Aimee Aylward, Ben Bahan, Carey Ballard, Cory Behm, Joshua Beckman, Rachel Benedict, Naomi Berlove Caselli, Elizabeth Cassidy, Lana Cook, Jaimee DiMarco, Daniel Ferro, Amanda Gaber, Graham Grail, Justin Bergeron, Chelsea Hammond, Ryan Hevia, Reema Kharoufa, Kelsey Koenigs, Alix Kraminitz, Corbin Kuntze, Yulia Labkovsky, Carly Levine, Rebecca Lopez, Jonathan McMillan, Travis Nguyen, Indya-Loreal Oliver, Caelan Pacelli, Braden Painter, Chrisann Papera, Emma Preston, Donna Riggle, Tyler Richard, Tory Sampson, Dana Schlang, Michael Schlang, Jessica Scott, Jonathan Suen, Norma Bowers Tourangeau, Blaze Travis, Amelia Wisniewski-Barker, Jennifer Witteborg, Norman Williams, Isabel Zehner

We also gratefully acknowledge:

contributions and assistance from the developers of the ASLLRP Data Access Interface (DAI, DAI 2), Christian Vogler and Augustine Opoku

support provided at Rutgers University by Dimitris Metaxas, Charles Hedrick, Charles McGrew, Hanz Makmur

support provided at Boston University by Stan Sclaroff, Ashwin Thangali, Vassilis Athitsos

support provided at Gallaudet University by Christian Vogler, Ben Bahan

those who contributed to development of prior versions of SignStream: David Greenfield (developer of versions through 2.2.2), Otmar Foeslche (developer supervisor), Ben Bahan, Judy Kegl, Robert G. Lee, Dawn MacLaughlin

Carol Neidle Project Director

*Development of SignStream®, our linguistically annotated corpora, and of our Data Access Interface (DAI and DAI 2), has been partially funded by grants from the National Science Foundation (NSF), including:

"NSF Convergence Accelerator [Phase I]--Track H: AI-based Tools to Enhance Access and Opportunities for the Deaf." [#2235405, D. Metaxas; C. Neidle; M. Huenerfauth]

"CHS: Medium: Collaborative Research: Linguistically Driven Sign Recognition from Continuous Signing for American Sign Language (ASL)." [#2212302, C. Neidle; 2212301, D. Metaxas; 2212303, M. Huenerfauth]

"NSF Convergence Accelerator [Phase I]--Track D: Data & AI Methods for Modeling Facial Expressions in Language with Applications to Privacy for the Deaf, ASL Education, and Linguistic Research." [#2040638, D. Metaxas; C. Neidle; M. Huenerfauth]

"CHS: Medium: Collaborative Research: Scalable Integration of Data-Driven and Model-Based Methods for Large Vocabulary Sign Recognition and Search." [#1763486, C. Neidle; 1763523, D. Metaxas; 1863529, M. Huenerfauth]

"EAGER: Collaborative Research: Data Visualizations for Linguistically Annotated, Publicly Shared, Video Corpora for American Sign Language (ASL)." [#1748016, C. Neidle; #1748022, D. Metaxas]

"HCC: Collaborative Research: Medium: Generating Accurate, Understandable Sign Language Animations Based on Analysis of Human Signing." [#IIS-1065013, C. Neidle; 10650090, M. Huenerfauth; 1054965, D. Metaxas]

"Medium: Collaborative Research: Linguistically Based ASL Sign Recognition as a Structured Multivariate Learning Problem." [#IIS-0964385, C. Neidle; #0964597, D. Metaxas]

“Collaborative Research: CI-ADDO-EN: Development of Publicly Available, Easily Searchable, Linguistically Analyzed, Video Corpora for Sign Language and Gesture Research.” [#1059218, C. Neidle, S. Sclaroff; #1059281, D. Metaxas; #1059221, B. Bahan, C. Vogler; #1059235, V. Athitsos]

“HCC: Large Lexicon Gesture Representation, Recognition, and Retrieval” [#0705749, S. Sclaroff, C. Neidle]

“SignStream: A Multimedia Tool for Language Research.” [#9528985, C. Neidle]

Once the ASLLRP Sign Bank has been installed, SignStream® will check for Sign Bank updates each time SignStream® is launched. If an update is needed, a terminal window will open to enable the update to occur, after which the program itself will launch.

See also our online corpora:

The Data Access Interface (DAI) <https://dai.cs.rutgers.edu/dai/s/daioriginal> allows browsing, search, and download of ASL video data (linguistically annotated through use of SignStream®), for (a) the National Center for Sign Language and Gesture Resources (NCSLGR) corpus; and (b) ~10,000 examples (over 4,000 distinct signs) in citation form, as part of the American Sign Language Lexicon Video Dataset (ASLLVD), which forms the basis for the initial ASLLRP Sign Bank (see above). [See Carol Neidle and Christian Vogler, A New Web Interface to Facilitate Access to Corpora: Development of the ASLLRP Data Access Interface, 5th Workshop on the Representation and Processing of Sign Languages: Interactions between Corpus and Lexicon, LREC 2012, Istanbul, Turkey, May 27, 2012.]

A new version of our Data Access Interface, DAI 2 (version 2), to facilitate browsing, search, and download of data from our expanding corpora, annotated through use of SignStream® 3 (with more detailed morpho-phonological information than in previous datasets), is now available! For details, see http://www.bu.edu/asllrp/about-dai2.html. This interface currently allows access to 47 collections from our new ASLLRP SignStream® 3 Corpus, with a total of over 2,000 utterances from 4 different signers (16,660 signed examples). Additional data has recently been added from RIT: see http://www.bu.edu/asllrp/about-datasets.pdf.

Important Note: Pop-ups must be enabled in your browser for the site to display the videos properly.

Here is an illustration of search results for (1) the sign glossed as "ALWAYS" (on top), and (2) negation (partial) in DAI 2:

The DAI 2 also provides access to our new ASLLRP Sign Bank!

See:

Neidle, Carol, Augustine Opoku, Carey Ballard, Yang Zhou, Xiaoxiao He & Dimitris Metaxas. 2024. New Capability to Look Up an ASL Sign from a Video Example. arXiv 2407.13571 [cs.CV]. 1-11. https://arxiv.org/abs/2407.13571.

Carol Neidle and Augustine Opoku [2024] A Guide to the ASLLRP Sign Bank – New Search Features. BU ASLLRP Report No. 25, Boston, MA. http://www.bu.edu/asllrp/rpt18/asllrp25.pdf

Carol Neidle and Augustine Opoku [2020] A User's Guide to the American Sign Language Linguistic Research Project (ASLLRP) Data Access Interface (DAI) 2 — Version 2. BU ASLLRP Report No. 18, Boston, MA. http://www.bu.edu/asllrp/rpt18/asllrp18.pdf

Note that new data and new features are available as of Spring 2022:

see http://www.bu.edu/asllrp/about-datasets.pdf and http://www.bu.edu/asllrp/New-features-DAI2.pdf

See also:

Carol Neidle, Augustine Opoku, and Dimitris Metaxas [2022] ASL Video Corpora & Sign Bank: Resource Available through the American Sign Language Linguistic Research Project (ASLLRP). arXiv:2201.07899 [cs.CL] https://arxiv.org/abs/2201.07899

Carol Neidle, Augustine Opoku, Gregory Dimitriadis, and Dimitris Metaxas [2018] NEW Shared & Interconnected ASL Resources: SignStream® 3 Software; DAI 2 for Web Access to Linguistically Annotated Video Corpora; and a Sign Bank, 8th Workshop on the Representation and Processing of Sign Languages: Involving the Language Community, LREC 2018, Miyazaki, Japan, pp. 147-154.

Return to the American Sign Language Linguistic Research Project